Cyber-attacks, evolving privacy and intellectual property legislation, and ever-increasing regulatory obligations are now simply “the new normal,” and the implications for development organizations are unavoidable: application risk management principles must be incorporated into every phase of the development lifecycle.

Organizations want to work smart: neither naïve nor paranoid. Application risk management is about getting this balance right. How much security is enough? Are you even protecting the right things?

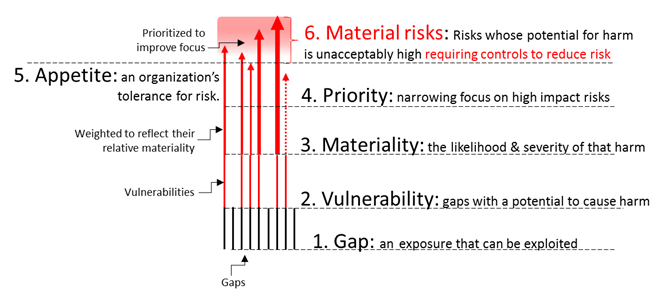

The six degrees of application risk offer a basic framework to engage application stakeholders in a productive dialogue – whether they are risk or security professionals, developers, management, or even end users.

With these concepts, organizations will be in a strong position to take advantage of the following risk management hacks (perhaps an unfortunate turn of phrase) that reduce the cost, effort, complexity, and time required to get your development on the right track.

Six Degrees of Application Risk

The following commonly used (and related) terms provide a minimal framework to communicate application risk concepts and priorities.

- Gaps are (mostly) well-understood behaviors and characteristics of an application, its runtime environment, and/or the people who interact with the application. For example, .NET and Java applications (managed applications) are especially easy to reverse-engineer. This isn’t an oversight or an accident that will be corrected in the “next release.” By design, managed code is distributed as intermediate bytecode with rich metadata (type, method, and member names), which is far more information than its C++ or other native-code counterparts retain, making it easier to reverse-engineer.

- Vulnerabilities are the subset of Gaps that, if exploited, can result in some sort of damage or harm. If, for example, an application is published as an open source project, one would not expect reverse engineering an instance of that application to do any harm. After all, as an open source project, its source code would be published alongside the executable. In this case, the Gap (reverse engineering) would NOT qualify as a Vulnerability.

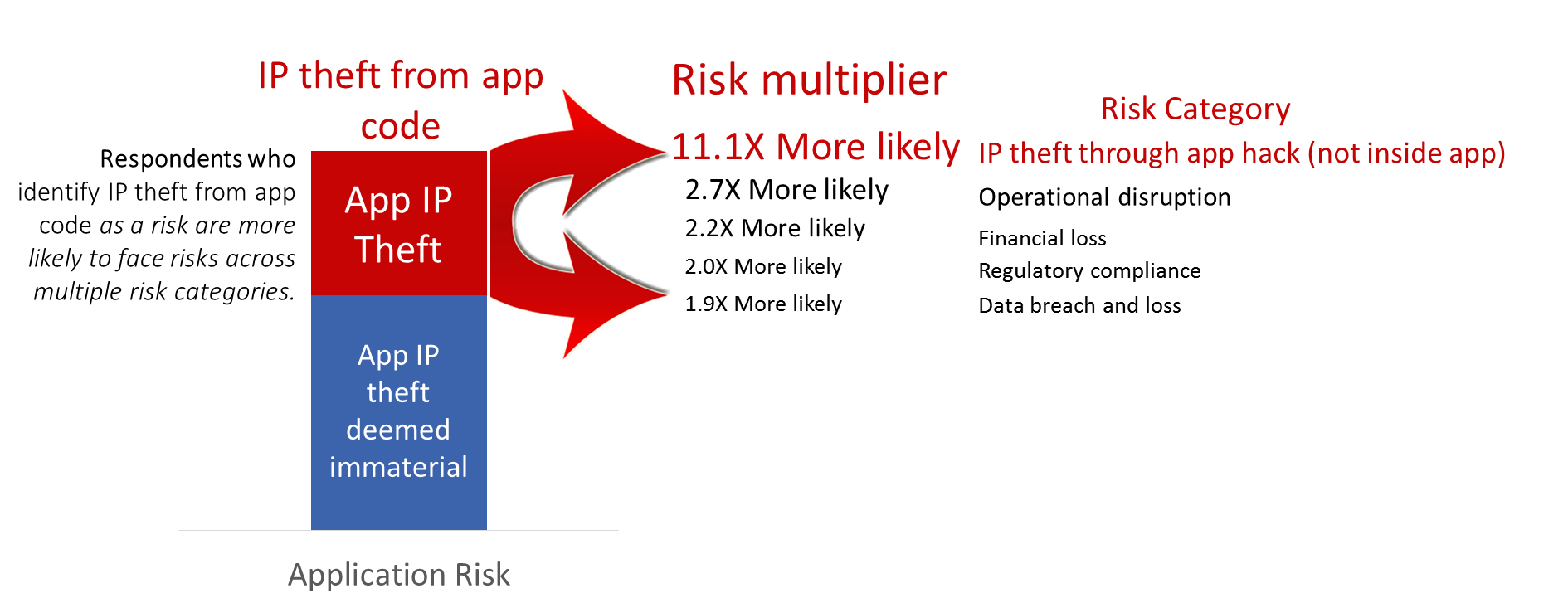

- Materiality is the subjective (but not arbitrary) assessment of how likely a vulnerability is to be exploited, combined with the severity of that exploitation. The likelihood that a meteor strikes Earth with climate-changing impact in the next three years is far lower than the likelihood of an electrical fire in your home. That difference in likelihood outweighs the fact that a meteor impact would obviously do far more harm than a single house fire. This is why we, as individuals, invest time and money preventing, detecting, and impeding electrical fires while taking no preemptive steps to mitigate the risk of a meteor collision.

- Priority ranking of vulnerabilities helps ensure that our limited resources are allocated most effectively. Vulnerabilities are not all created equal and, therefore, do not justify the same degree of risk mitigation investment. Life insurance is important, but medical insurance is typically seen as “more material,” justifying greater investment.

- Appetite for risk is another subjective (but not arbitrary) measure; appetite is synonymous with tolerance. Organizations cannot eliminate risk, but each organization must identify those vulnerabilities whose combined likelihood and impact are simply unacceptable. Some action is then required to reduce (not eliminate) those risks and bring them within tolerable levels. Health insurance does not reduce the likelihood of a health-related incident; it reduces some of the harm that stems from an incident when it occurs. While many individuals have both life and health insurance, many others feel that they can tolerate living without life insurance but cannot tolerate losing health insurance.

- Material risks are those vulnerabilities whose risk profiles are intolerably high. By definition, a material risk is any vulnerability that merits some level of investment to bring its likelihood, its impact, or both down to within tolerable levels. Ideally, once all risk management controls are in place, no “intolerable risks” remain.
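To make the relationships between these terms concrete, here is a minimal sketch of them as a small data model. It assumes that materiality is scored as the simple product of likelihood (on a hypothetical 0.0 to 1.0 scale) and impact (on a hypothetical 1 to 5 scale), and that appetite is a single numeric threshold; the framework itself does not prescribe any scoring formula, and every name and number below (`Gap`, `materiality`, `APPETITE`, the sample gaps) is illustrative only.

```python
from dataclasses import dataclass


@dataclass
class Gap:
    """A known behavior or characteristic of an application (hypothetical model)."""
    name: str
    harmful_if_exploited: bool  # True means this Gap is also a Vulnerability
    likelihood: float = 0.0     # assumed scale: 0.0 (never) to 1.0 (certain)
    impact: float = 0.0         # assumed scale: 1 (minor) to 5 (severe)


def vulnerabilities(gaps):
    """Vulnerabilities are the subset of Gaps that can cause harm if exploited."""
    return [g for g in gaps if g.harmful_if_exploited]


def materiality(v):
    """Assumed scoring: likelihood combined with impact as a simple product."""
    return v.likelihood * v.impact


def prioritize(vulns):
    """Priority ranking: most material vulnerabilities first."""
    return sorted(vulns, key=materiality, reverse=True)


def material_risks(vulns, appetite):
    """Material risks: ranked vulnerabilities whose score exceeds the risk appetite."""
    return [v for v in prioritize(vulns) if materiality(v) > appetite]


if __name__ == "__main__":
    APPETITE = 1.0  # hypothetical tolerance threshold
    gaps = [
        # For an open source app, reverse engineering is a Gap but not a Vulnerability.
        Gap("reverse engineering (open source app)", False),
        Gap("reverse engineering (proprietary app)", True, likelihood=0.6, impact=4),
        Gap("verbose error messages", True, likelihood=0.3, impact=2),
    ]
    for v in material_risks(vulnerabilities(gaps), APPETITE):
        print(v.name, round(materiality(v), 2))
```

With these made-up inputs, only the proprietary reverse-engineering gap (score 2.4) exceeds the appetite of 1.0; the verbose error messages (score 0.6) remain a vulnerability, but a tolerable one. A real organization would substitute its own scales and thresholds for these placeholders.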

Applying the Six Degrees of Application Risk

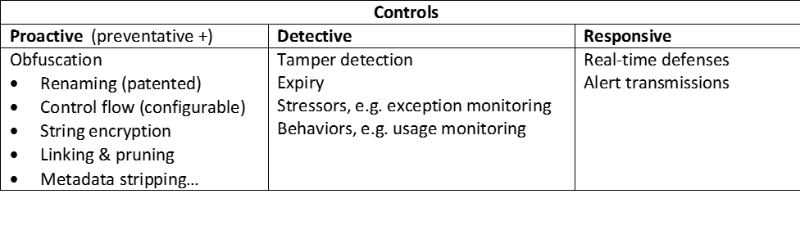

At a high level, extending these concepts into the development process translates into the following activities:

- Inventory relevant “gaps” across your development and production environments

- Identify the vulnerabilities within the collection of gaps

- Assess and prioritize according to your organization’s notions of materiality

- Agree on a consistent definition of your organization’s tolerance for these vulnerabilities (appetite)

- Identify the vulnerabilities that present a material risk

- Select and implement controls to mitigate these risks

- Measure, assess, and correct on an ongoing (periodic) basis
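The last two activities, selecting controls and then reassessing periodically, can be sketched as a loop that keeps adding controls until a risk's residual score falls within appetite. This is a minimal sketch under stated assumptions: it reuses a likelihood-times-impact score, models each control as a hypothetical factor that scales likelihood down (prevention) or impact down (like insurance, which reduces harm rather than likelihood), and names such as `Control`, `mitigate`, `review`, "code obfuscation", and "tamper detection" are illustrative, not a prescribed method.

```python
from dataclasses import dataclass, field


@dataclass
class Control:
    """A hypothetical mitigation that scales likelihood and/or impact down."""
    name: str
    likelihood_factor: float = 1.0  # < 1.0 makes exploitation less likely
    impact_factor: float = 1.0      # < 1.0 reduces the harm when exploitation occurs


@dataclass
class Risk:
    name: str
    likelihood: float  # assumed scale: 0.0 to 1.0
    impact: float      # assumed scale: 1 to 5
    controls: list = field(default_factory=list)

    def residual(self):
        """Residual score after every implemented control (assumed product model)."""
        lk, im = self.likelihood, self.impact
        for c in self.controls:
            lk *= c.likelihood_factor
            im *= c.impact_factor
        return lk * im


def mitigate(risk, candidates, appetite):
    """Implement candidate controls in order until the residual risk is tolerable.

    Returns True if the risk ends within appetite, or False if the candidates
    run out first (the risk then needs attention in the next review cycle).
    """
    for control in candidates:
        if risk.residual() <= appetite:
            break
        risk.controls.append(control)
    return risk.residual() <= appetite


def review(risks, appetite):
    """Periodic reassessment: names of risks whose residual score exceeds appetite."""
    return [r.name for r in risks if r.residual() > appetite]


if __name__ == "__main__":
    APPETITE = 1.0  # hypothetical tolerance threshold
    risk = Risk("reverse engineering", likelihood=0.6, impact=4)
    candidates = [
        Control("code obfuscation", likelihood_factor=0.25),
        Control("tamper detection", impact_factor=0.5),
    ]
    ok = mitigate(risk, candidates, APPETITE)
    print(ok, [c.name for c in risk.controls], review([risk], APPETITE))
```

In this made-up example, the first control alone lowers the residual score from 2.4 to 0.6, so the loop stops without implementing the second. That mirrors the goal of reducing, not eliminating, risk: once a risk is within tolerance, further investment is not justified.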

Simple, right?

Effective Application Risk Management Hacks

Incorporating any new process or technology into a mature development process is, in and of itself, a risky and potentially expensive proposition.

The threat of increased development complexity or cost, or of compromised application quality or user experience, is often motivation enough to maintain the status quo.

Avoid unnecessary waste and risk: follow the leaders

There is an old saying in risk management that “you don’t have to be the fastest running from the bear; you just don’t want to be the slowest.” Hackers mostly attack targets of opportunity, and regulators and the courts typically look for “reasonable” and “appropriate” controls. It is often much more efficient to benchmark and adapt the practices of your peers than to develop your own risk management and security practices from the ground up. There are many sources from which to choose.

- Benchmark your practices against those of your organization’s:

    - peers (similar organizations)

    - customers (their risks are often, by extension, your risks)

    - suppliers (they are experts in their specialty and/or may pose a risk if they do not live up to your appetite for risk)

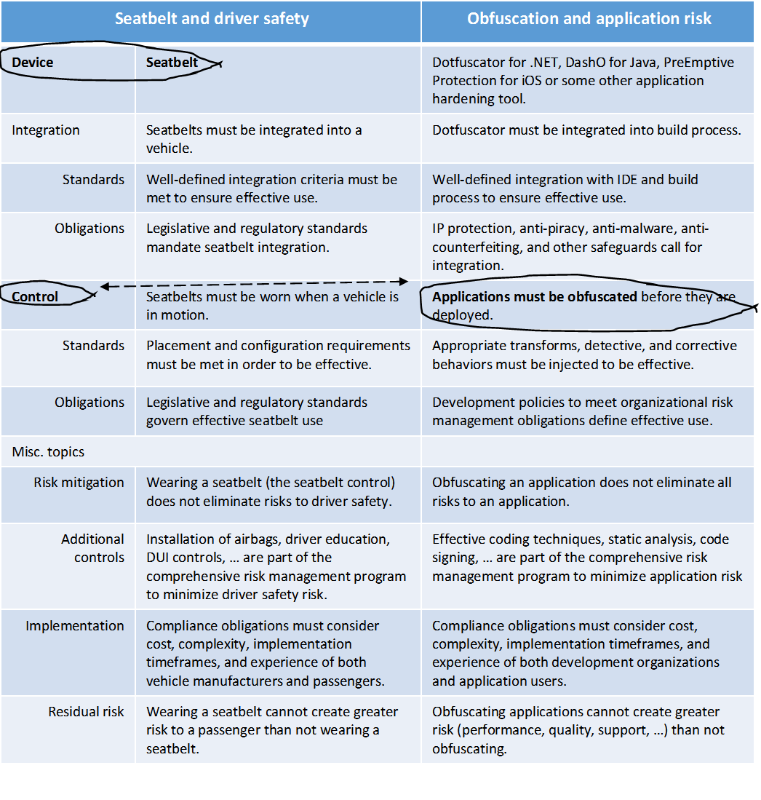

- Embrace well-understood and common practices

- Adopt an accepted standard or open risk management framework

- Monitor regulatory and legislative developments

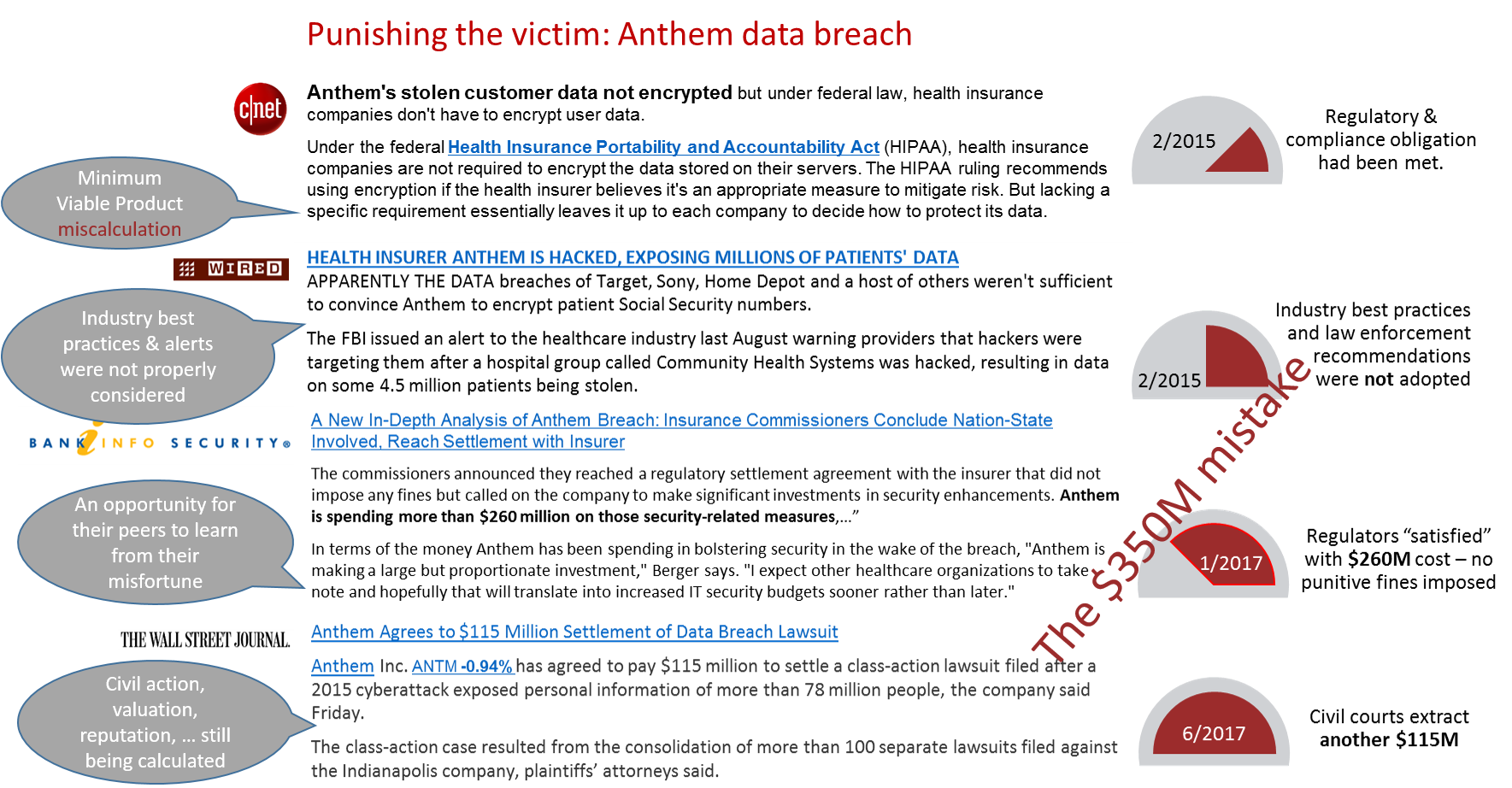

- Track relevant breaches and exploits, and their aftermath